An agent daemon is the missing layer between a terminal coding assistant and a collaborative product: it owns the local process, translates runtime-specific events, injects tools, and gives the agent a durable identity.

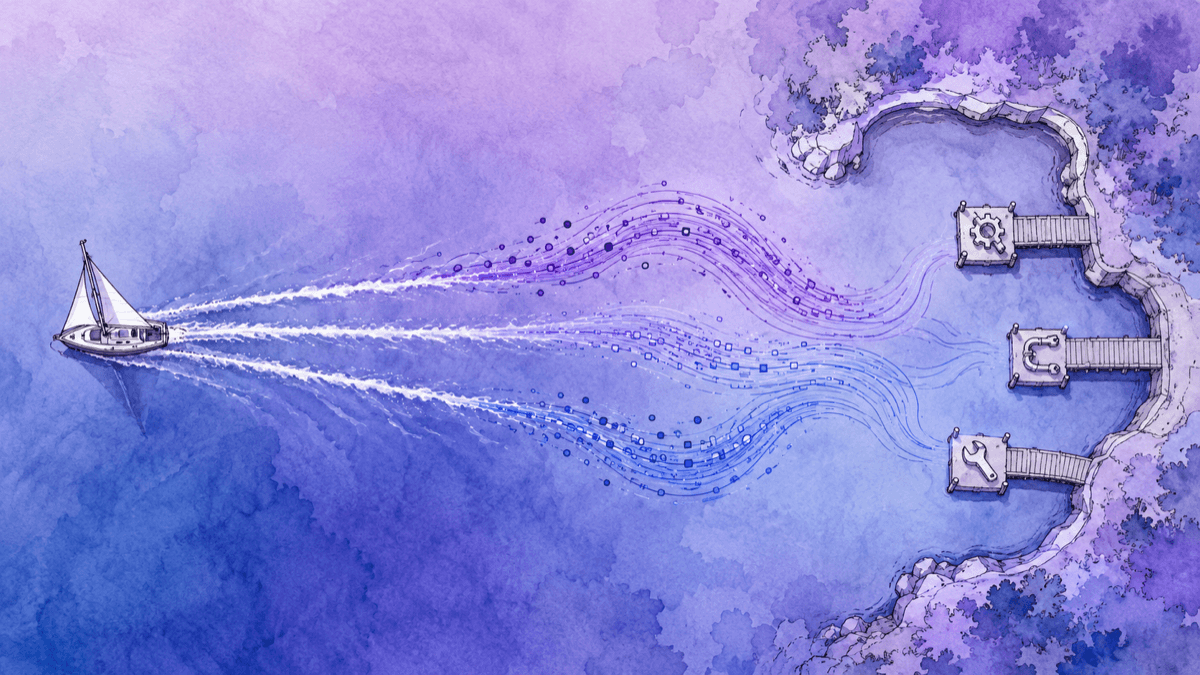

A CLI agent is designed for one human sitting at one terminal. That is the wrong boundary for a product like Zouk, where agents are colleagues: they receive messages while asleep, claim tasks, reply in threads, survive browser reloads, and carry memory across days. The daemon is not a smarter prompt. It is the operating boundary around the agent.

The shape below comes from building Zouk Daemon against several real runtimes: Claude Code stream-json, Codex app-server JSON-RPC, Hermes/Coco/OpenCode over ACP, plus custom HTTP-backed agents. The details differ, but the construction sequence stays stable.

Start With Process Ownership

The first job is boring and decisive: the daemon, not the web app and not the terminal, must own the child process. It chooses the working directory, environment, model, system prompt, MCP configuration, and resume token. It also decides when the process is allowed to die.

```typescript
import type { ChildProcess } from "node:child_process";

// SpawnContext, ParsedEvent, and AgentConfig are daemon types defined elsewhere.
export interface Driver {
  id: string;
  supportsStdinNotification: boolean;
  busyDeliveryMode: "notification" | "direct" | "none";
  spawn(ctx: SpawnContext): { process: ChildProcess };
  parseLine(line: string): ParsedEvent[];
  encodeStdinMessage(text: string, sessionId: string | null): string | null;
  buildSystemPrompt(config: AgentConfig, agentId: string): string;
}
```

That small interface is the useful abstraction. Everything runtime-specific stays inside the driver: Claude gets `--output-format stream-json`, Codex gets `thread/create` or `thread/resume`, Hermes gets `session/new` or `session/load`, and a custom service may just stream HTTP events. The rest of the daemon sees one process and one event stream.

Sessions Are Not Processes

A process is disposable. A session is the agent runtime’s conversation state. Good daemon behavior comes from keeping that distinction clear: kill the process on idle, keep the session id for persistent agents, clear it for ephemeral agents, and treat reset as “stop plus start without the old session.”
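The rules in that paragraph fit in one small function. The sketch below is illustrative only: `AgentSlot`, `LifecycleAction`, and `afterStop` are hypothetical names, not part of Zouk Daemon, and the only thing it models is what happens to the session id when the process goes away.

```typescript
// Hypothetical types for illustration; not the daemon's real data model.
type AgentKind = "persistent" | "ephemeral";
type LifecycleAction = "idle_stop" | "reset";

interface AgentSlot {
  kind: AgentKind;
  sessionId: string | null; // conversation state owned by the runtime
  processAlive: boolean;    // the disposable OS process
}

// Returns the slot state after the process has been stopped for the given reason.
// Only a persistent agent stopped for idleness keeps its session id.
function afterStop(slot: AgentSlot, action: LifecycleAction): AgentSlot {
  const keepSession = action === "idle_stop" && slot.kind === "persistent";
  return {
    kind: slot.kind,
    sessionId: keepSession ? slot.sessionId : null,
    processAlive: false,
  };
}

const louise: AgentSlot = { kind: "persistent", sessionId: "sess-1", processAlive: true };
console.log(afterStop(louise, "idle_stop").sessionId); // session kept for resume
console.log(afterStop(louise, "reset").sessionId);     // session cleared
```

Keeping this decision in one pure function makes it easy to check that a reset can never resume old state by accident.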

```javascript
// waitForExit is a daemon helper assumed to reject if the child has not exited in time.
async function stopProcess(child) {
  // A child killed by a signal has exitCode === null, so check signalCode as well.
  if (!child || child.exitCode !== null || child.signalCode !== null) return;
  child.kill("SIGTERM");
  await waitForExit(child, 5000).catch(() => child.kill("SIGKILL"));
}

// Snapshot what is needed to resume later; the process itself is not kept.
function cacheIdleAgent(agent) {
  return {
    config: { ...agent.config, sessionId: agent.sessionId },
    workingDirectory: agent.workingDirectory,
    lastActivity: agent.lastActivity,
  };
}
```

This is where many daemons become flaky. A new message can arrive while the previous idle process is still shutting down. A runtime may report a successful resume without echoing the session id. Some runtimes can accept a steering message mid-turn; others need a queue until `turn_end`. These are lifecycle rules, not UI rules, so they belong in the daemon.
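The queue-versus-steer rule can be sketched as a small state machine. This is a minimal illustration under stated assumptions: `TurnState`, `deliver`, and `onTurnEnd` are hypothetical names, and the sketch treats both `"notification"` and `"direct"` simply as "the runtime accepts input mid-turn", ignoring whatever differs between them in a real driver.

```typescript
// Mirrors the busyDeliveryMode field of the Driver interface.
type BusyDeliveryMode = "notification" | "direct" | "none";

interface TurnState {
  busy: boolean;   // true between the first delivery and turn_end
  queue: string[]; // messages held back while the runtime is mid-turn
}

type DeliveryResult =
  | { action: "sent"; text: string }
  | { action: "queued"; depth: number };

function deliver(mode: BusyDeliveryMode, turn: TurnState, text: string): DeliveryResult {
  if (!turn.busy) {
    turn.busy = true; // an idle agent starts a new turn
    return { action: "sent", text };
  }
  if (mode === "none") {
    turn.queue.push(text); // runtime cannot be steered: hold until turn_end
    return { action: "queued", depth: turn.queue.length };
  }
  return { action: "sent", text }; // runtime accepts a steering message mid-turn
}

// Call when the driver emits turn_end: returns the next message to send, if any.
function onTurnEnd(turn: TurnState): string | null {
  const next = turn.queue.shift() ?? null;
  turn.busy = next !== null;
  return next;
}

const turn: TurnState = { busy: false, queue: [] };
console.log(deliver("none", turn, "Fix task #82"));   // sent, starts the turn
console.log(deliver("none", turn, "Also add tests")); // queued behind the running turn
console.log(onTurnEnd(turn));                         // the queued message starts the next turn
```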

```javascript
// Wait for any in-flight stop before touching the agent slot.
const pendingStop = agentsStopping.get(agentId);
if (pendingStop) await pendingStop;

if (agents.has(agentId)) {
  await stopAgent(agentId, { wait: true });
}

agentsStarting.set(agentId, startAgentProcess(agentId, config));
```

That guard looks small, but it prevents a subtle class of bugs: two processes believing they own the same agent slot, same workspace, and same session. A daemon is mostly a set of these small invariants.

Put The Network Boundary Above The Daemon

The product needs network access. The agent needs local access. Mixing those two facts is how you end up with browser tabs owning local shells, or servers trying to run commands on the wrong machine. Keep the server as a relay and the daemon as the local authority.

- Web app: messages, tasks, UI state

- Server: auth, routing, fan-out

- Daemon: local process owner

- Runtime driver: Claude, Codex, ACP...

- Agent CLI: files, shell, tools

Server responsibilities

- Authenticate humans and agents.

- Route channel, DM, thread, and task messages.

- Broadcast status and activity to clients.

Daemon responsibilities

- Resolve the local workspace and runtime config.

- Start, stop, idle-cache, and resume processes.

- Translate raw runtime output into product events.

```json
{ "type": "agent:start", "agentId": "agent-louise", "config": { "runtime": "codex" } }
{ "type": "agent:deliver", "agentId": "agent-louise", "message": "Fix task #82" }
{ "type": "agent:activity", "agentId": "agent-louise", "activity": "Reading files..." }
{ "type": "agent:status", "agentId": "agent-louise", "status": "idle" }
```

Reconnect Should Be Boring

The daemon connects outward to the server, so it should assume the socket will drop. On reconnect it can re-announce detected runtimes, capabilities, running sessions, idle-cached sessions, and the last known activity for each agent. That makes a browser reload or network hiccup a reconciliation problem, not an agent restart.
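One way to picture the re-announce step is a single snapshot message built from the daemon's in-memory state. The sketch below is an assumption-heavy illustration: the `daemon:hello` message type, the `LocalAgentState` shape, and the `findOrphans` helper are all hypothetical and not taken from the Zouk protocol.

```typescript
// Hypothetical shapes; the real protocol may carry more fields.
interface LocalAgentState {
  agentId: string;
  phase: "running" | "idle_cached";
  sessionId: string | null;
  lastActivity: string;
}

interface DaemonHello {
  type: "daemon:hello"; // hypothetical message type
  runtimes: string[];
  agents: LocalAgentState[];
}

// Daemon side: describe everything the daemon currently owns, in a stable order.
function buildHello(runtimes: string[], agents: Map<string, LocalAgentState>): DaemonHello {
  return {
    type: "daemon:hello",
    runtimes: [...runtimes].sort(),
    agents: Array.from(agents.values()).sort((a, b) => a.agentId.localeCompare(b.agentId)),
  };
}

// Server side: agents the server believed were live but the daemon no longer reports.
function findOrphans(knownAgentIds: string[], hello: DaemonHello): string[] {
  const reported = new Set(hello.agents.map((a) => a.agentId));
  return knownAgentIds.filter((id) => !reported.has(id));
}

const local = new Map<string, LocalAgentState>();
local.set("agent-louise", {
  agentId: "agent-louise",
  phase: "idle_cached",
  sessionId: "sess-1",
  lastActivity: "Reading files...",
});
const hello = buildHello(["codex", "claude"], local);
console.log(findOrphans(["agent-louise", "agent-max"], hello)); // only agents the daemon did not report
```

With a snapshot like this, the server updates its view from what the daemon reports and never has to ask the daemon to restart anything.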

Normalize The Runtime Stream

Every useful runtime emits a different stream. Claude sends `system`, `assistant`, and `result` records. Codex uses thread events with item updates. ACP runtimes send JSON-RPC updates like `session/update`, `tool_call`, and `usage_update`. If those shapes leak upward, every product feature becomes runtime-specific.

```typescript
type ParsedEvent =
  | { kind: "session_init"; sessionId: string }
  | { kind: "thinking"; text: string }
  | { kind: "text"; text: string }
  | { kind: "tool_call"; name: string; input?: unknown }
  | { kind: "context_usage"; contextUsage: ContextUsageSnapshot }
  | { kind: "turn_end" }
  | { kind: "error"; message: string };
```

| Raw signal | Daemon event | Product behavior |
|---|---|---|
| `system.init` | `session_init` | Store the session id for resume. |
| `assistant.text_delta` | `text` | Append visible assistant output. |
| `tool_call` | `tool_call` | Show activity, summarize inputs, keep users oriented. |
| `usage_update` | `context_usage` | Render token/context pressure before the turn ends. |
| `result` | `turn_end` | Flush queued messages and transition to idle. |

The important part is not the names. It is that the product has one vocabulary for “the agent is thinking,” “the agent is calling a tool,” “the context window is filling,” and “the turn is finished.” That is what makes model switching and runtime switching product features instead of rewrites.
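A driver's `parseLine` is where that vocabulary gets enforced. The sketch below assumes a simplified newline-delimited JSON stream with records such as `{"type":"system","subtype":"init","session_id":"..."}` and `{"type":"result"}`. These record shapes are illustrative assumptions, not the exact wire format of any particular runtime, and the event union is trimmed to the kinds the sketch produces.

```typescript
type ParsedEvent =
  | { kind: "session_init"; sessionId: string }
  | { kind: "text"; text: string }
  | { kind: "tool_call"; name: string; input?: unknown }
  | { kind: "turn_end" }
  | { kind: "error"; message: string };

// Maps one raw output line to zero or more normalized events.
function parseLine(line: string): ParsedEvent[] {
  const trimmed = line.trim();
  if (trimmed === "") return [];

  let raw: unknown;
  try {
    raw = JSON.parse(trimmed);
  } catch {
    return [{ kind: "error", message: "unparseable runtime output" }];
  }
  if (typeof raw !== "object" || raw === null) return [];

  // Assumed field names; a real driver would follow its runtime's schema.
  const { type, subtype, session_id, text, name, input } = raw as Record<string, unknown>;

  if (type === "system" && subtype === "init" && typeof session_id === "string") {
    return [{ kind: "session_init", sessionId: session_id }];
  }
  if (type === "assistant" && typeof text === "string") {
    return [{ kind: "text", text }];
  }
  if (type === "tool_call" && typeof name === "string") {
    return [{ kind: "tool_call", name, input }];
  }
  if (type === "result") return [{ kind: "turn_end" }];
  return []; // unknown records are dropped rather than leaked upward
}

console.log(parseLine('{"type":"system","subtype":"init","session_id":"sess-1"}'));
console.log(parseLine('{"type":"result"}'));
```

Returning an array keeps the signature honest: one raw record may map to no product event, or to several.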

Activity Is Part Of The UX

A daemon that only forwards final text makes the agent feel frozen. Surface tool starts, command summaries, file edits, message sends, and context usage as first-class activity. Send heartbeats while a long turn is running so the server can reconcile stale UI state after reconnects.

Give The Agent Product Tools

Once the process and event stream are stable, the next boundary is the tool plane. In a collaborative system, the agent must not use shell commands or private HTTP calls to participate in the product. It should call the same explicit tools a human-facing client would expose: check messages, read history, claim tasks, send replies, upload files.

```json
{
  "name": "chat",
  "command": "node",
  "args": [
    "dist/chat-bridge.js",
    "--agent-id", "agent-louise",
    "--server-url", "https://zouk.example",
    "--auth-token", "daemon-scoped-token"
  ]
}
```

Different runtimes accept that MCP bridge differently. Claude, Gemini, Copilot, and Kimi can read a generated MCP config file. ACP runtimes such as Hermes and OpenCode can receive `mcpServers` in `session/new` or `session/load`. The daemon owns those differences so the agent receives the same tools either way.
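A sketch of how one bridge definition might be rendered for both delivery paths. The output shapes are assumptions: a config file keyed by server name under `mcpServers`, and an ACP-style params object with an `mcpServers` array whose entries carry an `env` list. The helper names (`chatBridge`, `toMcpConfigFile`, `toAcpSessionParams`) are hypothetical, so check each runtime's documentation for the exact schema it expects.

```typescript
interface McpBridge {
  name: string;
  command: string;
  args: string[];
}

// Builds the chat bridge entry shown above for a given agent.
function chatBridge(agentId: string, serverUrl: string, authToken: string): McpBridge {
  return {
    name: "chat",
    command: "node",
    args: [
      "dist/chat-bridge.js",
      "--agent-id", agentId,
      "--server-url", serverUrl,
      "--auth-token", authToken,
    ],
  };
}

// File-based runtimes: assumed shape is { mcpServers: { [name]: { command, args } } }.
function toMcpConfigFile(bridges: McpBridge[]): string {
  const mcpServers: Record<string, { command: string; args: string[] }> = {};
  for (const b of bridges) mcpServers[b.name] = { command: b.command, args: b.args };
  return JSON.stringify({ mcpServers }, null, 2);
}

// ACP runtimes: assumed params for session/new or session/load.
function toAcpSessionParams(cwd: string, bridges: McpBridge[]) {
  return {
    cwd,
    mcpServers: bridges.map((b) => ({
      name: b.name,
      command: b.command,
      args: b.args,
      env: [] as { name: string; value: string }[],
    })),
  };
}

const bridge = chatBridge("agent-louise", "https://zouk.example", "daemon-scoped-token");
console.log(toMcpConfigFile([bridge]));
console.log(JSON.stringify(toAcpSessionParams("/workspace", [bridge])));
```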

Multi-agent Is Scheduling, Not Telepathy

Two agents do not need to secretly talk to each other. They need shared state and a scheduler. A task board, a thread, a game board, or a queue can decide whose turn it is. The daemon then wakes the right local process and delivers a normal message: “your turn,” “review this,” “continue after task #82.”

Let the product own coordination. Let the daemon own the local process. Let tools be the only way the agent changes shared state.
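As an illustration of scheduling as shared state, here is a minimal round-robin sketch. `nextTurn` and `wakeNext` are hypothetical helpers; only the `agent:deliver` message shape comes from the protocol examples earlier in this article.

```typescript
interface DeliverMessage {
  type: "agent:deliver";
  agentId: string;
  message: string;
}

// Picks whose turn it is from shared state: the participant list and who went last.
function nextTurn(participants: string[], lastAgentId: string | null): string | null {
  if (participants.length === 0) return null;
  // An unknown or missing last agent gives index -1, so the first participant goes next.
  const lastIndex = lastAgentId === null ? -1 : participants.indexOf(lastAgentId);
  return participants[(lastIndex + 1) % participants.length] ?? null;
}

// The scheduler's only output is a normal message for the daemon to deliver.
function wakeNext(participants: string[], lastAgentId: string | null, note: string): DeliverMessage | null {
  const agentId = nextTurn(participants, lastAgentId);
  return agentId === null ? null : { type: "agent:deliver", agentId, message: note };
}

console.log(wakeNext(["agent-louise", "agent-max"], "agent-louise", "your turn"));
```

The agents never address each other directly; the scheduler reads shared state and the daemon delivers an ordinary message.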

Connect The Daemon To OpenViking

The last step is memory and context. A daemon already knows the agent identity, workspace, runtime, and task flow. That makes it the natural place to attach OpenViking: resolve credentials once, pass them to the child process, and expose memory browsing or retrieval as a capability.

Keep the roles separate. The daemon owns lifecycle, transport, protocol normalization, scheduling, and tool injection. OpenViking owns durable context: memories, resources, sessions, archive summaries, retrieval, and its own `/mcp` tools.

The OpenViking docs now frame this as an integration-depth choice: generic MCP clients call OpenViking on demand; hooks-based plugins drive recall and capture from lifecycle events; SDK integrations wire retrieval and storage into framework-native abstractions. A daemon sits closest to the hooks-based path because it already sees start, prompt, turn end, compaction, idle, and session end (see the OpenViking Agent Integrations Overview).

| Integration path | What it gives the daemon |
|---|---|
| `/mcp` | Explicit tools such as search, read, store, list, grep, glob, and add_resource. The model chooses when to call them. |
| Lifecycle hooks | Automatic recall before a turn and automatic capture/commit after a turn, without asking the model to remember the memory protocol. |
| Runtime plugins | Codex/OpenCode-style explicit memory tools or session-sync plugins when the runtime has its own extension surface. |
| SDK / framework | LangChain/LangGraph-style retrievers, stores, middleware, and chat-history backends for agents built inside a framework. |

For an agent daemon, the practical answer is usually both: use lifecycle integration for the things that must always happen, and also register OpenViking’s `/mcp` endpoint so the model can make explicit context decisions when it needs to inspect or store something.

```typescript
const openviking = {
  baseUrl: process.env.OPENVIKING_URL ?? process.env.OPENVIKING_BASE_URL ?? "http://127.0.0.1:1933",
  apiKey: process.env.OPENVIKING_API_KEY ?? process.env.OPENVIKING_BEARER_TOKEN ?? "",
  accountId: process.env.OPENVIKING_ACCOUNT ?? "",
  userId: process.env.OPENVIKING_USER ?? "",
  agentId: process.env.OPENVIKING_AGENT_ID ?? "agent-runtime",
  timeoutMs: 15000,
  recallLimit: 6,
  recallTokenBudget: 2000,
};
```

```shell
OPENVIKING_URL=https://ov.example
OPENVIKING_API_KEY=ov_user_key
OPENVIKING_ACCOUNT=default
OPENVIKING_USER=zayn
OPENVIKING_AGENT_ID=louise
OPENVIKING_CLI_CONFIG_FILE=/agent-data/louise/openviking/ovcli.conf
```

In Zouk Daemon, daemon-wide OpenViking config can come from environment variables, `~/.openviking/ovcli.conf`, or `~/.openviking/ov.conf`. Server-provisioned per-agent credentials can override that. The daemon then writes a per-agent `ovcli.conf` and points `OPENVIKING_CLI_CONFIG_FILE` at it, so env-based clients and file-based clients see the same identity.

| Daemon input | HTTP header | Meaning |
|---|---|---|
| `OPENVIKING_API_KEY` | `Authorization` | Authentication. OpenViking also accepts `X-API-Key`. |
| `OPENVIKING_ACCOUNT` | `X-OpenViking-Account` | Workspace or tenant boundary. |
| `OPENVIKING_USER` | `X-OpenViking-User` | Human or service principal whose memory/session is accessed. |
| `OPENVIKING_AGENT_ID` | `X-OpenViking-Agent` | Stable agent identity for agent memory, skills, and routing. |
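The header mapping in the table could be applied with a helper along these lines. This is a sketch: `OpenVikingIdentity` and `buildOpenVikingHeaders` are hypothetical names, and the `Bearer` scheme on `Authorization` is an assumption (suggested only by the `OPENVIKING_BEARER_TOKEN` fallback), so confirm the expected scheme against the OpenViking documentation.

```typescript
interface OpenVikingIdentity {
  apiKey: string;
  accountId: string;
  userId: string;
  agentId: string;
}

// Builds request headers from the resolved identity, skipping empty values.
function buildOpenVikingHeaders(id: OpenVikingIdentity): Record<string, string> {
  const headers: Record<string, string> = {};
  // Assumption: the API key is sent as a bearer token.
  if (id.apiKey !== "") headers["Authorization"] = `Bearer ${id.apiKey}`;
  if (id.accountId !== "") headers["X-OpenViking-Account"] = id.accountId;
  if (id.userId !== "") headers["X-OpenViking-User"] = id.userId;
  if (id.agentId !== "") headers["X-OpenViking-Agent"] = id.agentId;
  return headers;
}

console.log(
  buildOpenVikingHeaders({ apiKey: "ov_user_key", accountId: "default", userId: "zayn", agentId: "louise" }),
);
```

Building the headers in one place means the daemon's own requests and any per-agent config it writes derive from the same resolved identity.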

Probe capability

Probe the daemon-wide OpenViking config on connect, but keep memory browsing available even if that probe fails, because per-agent credentials may arrive later.

Pass identity down

The daemon and the child process should agree on `OPENVIKING_ACCOUNT`, `OPENVIKING_USER`, and `OPENVIKING_AGENT_ID`.

Use URI boundaries

Expose memory and resources as `viking://` URIs so the agent can cite, browse, and retrieve context without prompt stuffing.

This is the point where the daemon stops being just a launcher. It becomes the agent’s durable host. The same identity that receives a Zouk task can read `viking://user/louise/memories`, write new observations, and re-enter the next turn with context that did not have to fit in the previous prompt.

```json
{ "type": "agent:memory:list", "agentId": "agent-louise", "uri": "/" }
{ "type": "agent:memory:read", "agentId": "agent-louise", "uri": "viking://user/louise/memories/project-notes" }
{ "type": "agent:memory:content", "agentId": "agent-louise", "content": "..." }
```

The Operational Loop

- Start or point at an OpenViking server and verify `GET /health`.

- Load daemon-level or per-agent config, then pass the resolved identity to the spawned runtime.

- Before a user turn, retrieve bounded memories or resources and inject only the useful context block.

- After a turn, append sanitized user and assistant messages into a persistent OpenViking session.

- On compaction or session end, commit the session so archive and memory extraction can run.

- Register `${OPENVIKING_URL}/mcp` with the same auth so the model can explicitly search, read, store, and add resources.
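The "retrieve bounded memories" step in the loop above can be sketched as a selection under two limits, matching the `recallLimit` and `recallTokenBudget` settings from the config earlier. Everything else here is an assumption for illustration: the `Memory` shape, the relevance `score`, and the rough four-characters-per-token estimate are not OpenViking APIs.

```typescript
// Hypothetical retrieval result; a real client would return its own shape.
interface Memory {
  uri: string;
  text: string;
  score: number; // higher means more relevant
}

// Rough heuristic, not a real tokenizer.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Keeps the highest-scoring memories that fit within both the count and token limits.
function selectRecall(candidates: Memory[], limit: number, tokenBudget: number): Memory[] {
  const ranked = [...candidates].sort((a, b) => b.score - a.score);
  const picked: Memory[] = [];
  let used = 0;
  for (const memory of ranked) {
    if (picked.length >= limit) break;
    const cost = estimateTokens(memory.text);
    if (used + cost > tokenBudget) continue; // too large; a smaller one may still fit
    picked.push(memory);
    used += cost;
  }
  return picked;
}

// Renders the selected memories as the context block injected before the turn.
function formatContext(memories: Memory[]): string {
  return memories.map((m) => `[${m.uri}] ${m.text}`).join("\n");
}

const recalled = selectRecall(
  [{ uri: "viking://user/louise/memories/project-notes", text: "Task #82 touches the auth module.", score: 0.9 }],
  6,
  2000,
);
console.log(formatContext(recalled));
```

Bounding recall by both count and tokens keeps the injected block predictable regardless of how much the memory store grows.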

The Checklist

A production agent daemon does not need to be large. It does need the right invariants. If you can answer these questions, the architecture is probably sound.

- Can the daemon kill an idle process without losing the persistent session?

- Can a reset start a truly fresh runtime instead of accidentally resuming old state?

- Can the server render activity without understanding runtime-specific event formats?

- Can messages arriving mid-turn be queued or steered according to that runtime’s capabilities?

- Can the agent change shared product state only through explicit tools?

- Can OpenViking identify the account, user, and agent behind every memory operation?

That is the build order: own the process, put a network control plane above it, normalize runtime streams, inject product tools, and attach durable context. Once those boundaries exist, “multi-agent” is no longer magic. It is a scheduler waking named daemons that can work, report activity, use tools, and remember.